Kippa - COMS 6733, 3D Photography


Full Body 3D Scanning

| Sam Calabrese | Abhishek Gandhi | Changyin Zhou |

| smc2171@columbia.edu | asg2160@columbia.edu | changyin@cs.columbia.edu |


[Proposal] [Timeline] [References] [Thanks]

[Report Download] [Data Download] [Animations Download] [Presentation Slides]

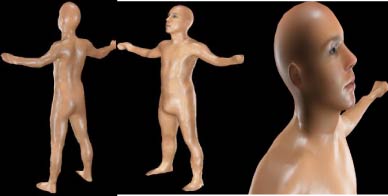

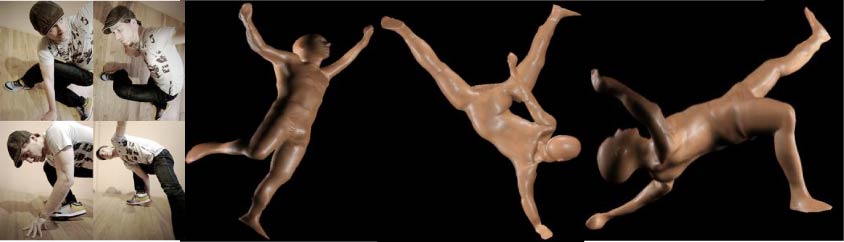

In this project, we model a live human by combining an image-based method with laser range scanning. For the head, we plan to use a program called Facegen, which takes three images of the face and exploits the inherent symmetry of the human face to create an accurate model of the face and an estimated model of the rest of the head. For the body, we will use the Leica 3D range scanner to capture accurate 3D data. We will then attach the head to the body in software, for example 3Ds Max. Texture from the scan is not critical: the model will not be fully clothed during scanning, since clothing would make the body data inaccurate, so we will import the model into a 3D software package and add skin and clothing there, while the face texture is provided automatically by Facegen. Finally, the model will be imported into an augmented reality application in which, using a webcam and a marker, turning the marker turns the model.

Our project presents some interesting technical challenges. One major challenge is getting the person being scanned to hold the same pose throughout; for example, their arms shouldn't move up or down. The number of scans also has to be kept small because the subject is a person, so the 3D data will not be as good as for a statue, where scans can be spread over multiple days with no change in dimensions or orientation. Finally, texture mapping, clothing and animating the model are difficult, as is placing it in augmented reality.

While 3D scanning of humans is used commercially for movies and video games, many systems use either known-pattern projection or image-based modeling, which are easier on the person but do not produce perfect results. Others use lasers that can hurt the person if handled carelessly. Our method combines the image-based and laser approaches, which should give both a better model and a more comfortable experience for our subject.

Timeline and project milestones


2007 Oct. 23 Meeting at CEPSR 6LW3 - Decide the project

We mainly discussed the choice of our final project. We had three proposals. The first is to create a 3D model of the full body by combining a range scanner with an image-based method, and then animate it; the second is a new method that uses a cone-shaped mirror to obtain a 3D model and a panoramic image of an object in one scan; and the third is to scan the Lion Statue.

We finally chose the first one for several reasons:

1. It is challenging. How do we reduce the subject's movement during scanning? How do we handle the slight distortions during registration? How do we merge the head and body meshes?

2. It is meaningful. Lots of people are interested in getting an accurate model of their own full body, and we can go on to create interesting animations from the models, which makes this proposal more attractive.

3. Proposal 2 is more like a research topic, so its progress is difficult to predict.

4. Proposal 3, compared with proposals 1 and 2, seems to lack novelty and fun.


2007 Oct. 29 Meeting at CEPSR 7LW1 - Draft plan and schedule

Reducing body movement during the long scanning time is an important problem in our project. In this meeting, we decided to give up the previously proposed "turntable" solution for two reasons. First, it is difficult for the model to stand still and keep the same pose after each rotation. Second, the model has to rest during the long scanning time, and a turntable does not help the model remember and return to the original pose.

We proposed to mark the ground to record the positions of the feet, use two pillars to rest and fix the arms, and use an additional camera to keep track of the model's position throughout the scanning.
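One way an additional camera could help is by comparing each new frame against a reference frame taken at the start of a scan. The sketch below is a minimal illustration of that idea, assuming grayscale frames given as nested lists of 0-255 intensities. The function names and the threshold are illustrative assumptions, not a description of the setup actually used.

```python
def mean_abs_diff(reference, current):
    """Average per-pixel absolute difference between two grayscale frames."""
    if len(reference) != len(current) or any(
        len(r) != len(c) for r, c in zip(reference, current)
    ):
        raise ValueError("frames must have the same size")
    total = 0
    count = 0
    for ref_row, cur_row in zip(reference, current):
        for ref_px, cur_px in zip(ref_row, cur_row):
            total += abs(ref_px - cur_px)
            count += 1
    return total / count if count else 0.0


def pose_has_drifted(reference, current, threshold=5.0):
    """Flag a frame whose difference from the reference exceeds the threshold.

    The threshold (in intensity levels) is an assumed value that would need
    tuning for real lighting and camera noise.
    """
    return mean_abs_diff(reference, current) > threshold


if __name__ == "__main__":
    reference = [[10, 10, 10], [10, 200, 10], [10, 10, 10]]
    same_pose = [[11, 10, 9], [10, 198, 10], [10, 11, 10]]  # sensor noise only
    moved = [[10, 10, 10], [10, 10, 200], [10, 10, 10]]     # bright spot shifted
    print(pose_has_drifted(reference, same_pose))
    print(pose_has_drifted(reference, moved))
```

A whole-frame average is a crude measure; comparing only the regions around the ground marks or body stickers would likely be more sensitive to small pose changes.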

A draft schedule was worked out, which includes: find a model, take measurements, set up the environment (a large room, two pillars, body stickers and ground marks), scan and take photos, registration, merging, animation, and then augmented reality (optional).

Then, we set out to find the model.


2007 Oct. 29 - Nov. 18 Find a Model

We wanted a professional model who could stand still and remember the pose for a long time. We tried many different approaches over the following weeks. Sam posted an ad on Craigslist and got lots of responses, from which we found our final model, a professional dancer and model. In total, it took us a little over two weeks to find the model and make a scanning appointment with him.


2007 Nov. 19 Data Capture at CEPSR 6LW3 19:00-24:00

18:00 - 19:30 Set up the scanning environment, which includes:

1. Reserve a closed, private large room

2. Set up two tripods to rest and fix the arms

3. Measure the space and decide on the four best angles to scan from

4. Place and name the targets

5. Practice setting up and moving the scanner

6. Do test scans before the model comes

7. Decide the scan precision

8. Set the scanner at the same height as the shoulders

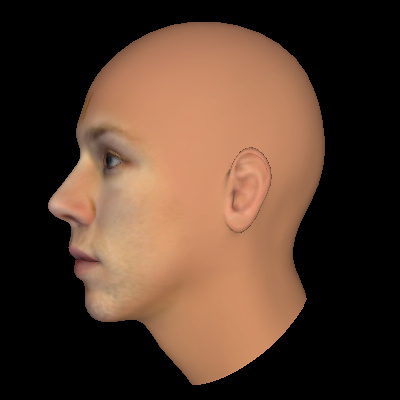

20:00 Take photos for the head modeling

| Side Image (Left) | Frontal Image | Side Image (Right) |

These three photos are the input to Facegen, which generates the 3D head model: [Download WRL file]

|

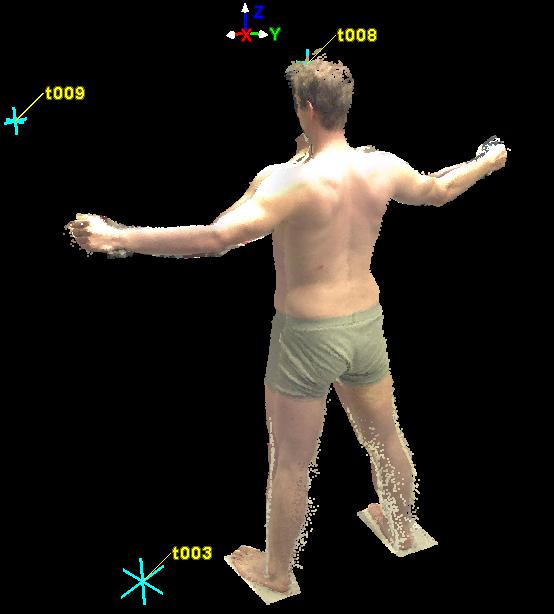

20:30 - 12:00 Body Scanning |

||

|

|

|

|

|

Totally 4 scans (45o Right/Left x Front/Back) were done in four hours. We use ground marks, tripods and camera to help the model keep still and at the same position. |

||

|

|

|

|

| At the begin of the first scan | At the end of the first scan | In the middle of second scan |


2007 Nov. 21 Registration at CEPSR 6LW3: A Data Preview and First Registration attempt

Each scan looks good on its own, but as expected, we observed some holes at the top and bottom of the arms and legs. The body moved forward or backward a little during the scans, and so did the arms. We need non-rigid 3D registration, so we saved the data and will try to do a better registration with Vrip.

View 1

View 2
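The effect of body movement on registration can be illustrated with a small sketch. It fits the best rigid transform between matched points from two scans and reports the leftover error. This is a simplified illustration, not what Cyclone, Scanalyze or Vrip do internally: it assumes that point correspondences are already known and that the two scans differ only by a rotation about the vertical (z) axis plus a translation. All function names are hypothetical.

```python
import math


def fit_rigid_about_z(source, target):
    """Least-squares rotation about z plus translation mapping source to target.

    source and target are equal-length lists of matched (x, y, z) points.
    Returns (angle_in_radians, (tx, ty, tz)).
    """
    if len(source) != len(target) or not source:
        raise ValueError("need the same non-zero number of matched points")
    n = len(source)
    sc = [sum(p[i] for p in source) / n for i in range(3)]  # source centroid
    tc = [sum(q[i] for q in target) / n for i in range(3)]  # target centroid
    cross = dot = 0.0
    for p, q in zip(source, target):
        ax, ay = p[0] - sc[0], p[1] - sc[1]
        bx, by = q[0] - tc[0], q[1] - tc[1]
        cross += ax * by - ay * bx
        dot += ax * bx + ay * by
    angle = math.atan2(cross, dot)
    c, s = math.cos(angle), math.sin(angle)
    tx = tc[0] - (c * sc[0] - s * sc[1])
    ty = tc[1] - (s * sc[0] + c * sc[1])
    tz = tc[2] - sc[2]
    return angle, (tx, ty, tz)


def apply_rigid(points, angle, translation):
    """Rotate points about z by angle, then translate them."""
    c, s = math.cos(angle), math.sin(angle)
    tx, ty, tz = translation
    return [(c * x - s * y + tx, s * x + c * y + ty, z + tz) for x, y, z in points]


def rms_residual(aligned, target):
    """Root-mean-square distance between matched points."""
    sq = sum(sum((a - b) ** 2 for a, b in zip(p, q)) for p, q in zip(aligned, target))
    return math.sqrt(sq / len(target))


if __name__ == "__main__":
    body = [(0.0, 0.0, 0.0), (0.3, 0.0, 1.0), (-0.3, 0.0, 1.0), (0.0, 0.2, 1.6)]
    # Second scan: the same points seen after a 90 degree turn and a shift.
    moved = apply_rigid(body, math.pi / 2, (1.0, 2.0, 0.0))
    angle, t = fit_rigid_about_z(body, moved)
    print(math.degrees(angle), rms_residual(apply_rigid(body, angle, t), moved))
    # If one arm point drops between scans, no single rigid transform fits.
    moved[1] = (moved[1][0], moved[1][1], moved[1][2] - 0.1)
    angle, t = fit_rigid_about_z(body, moved)
    print(rms_residual(apply_rigid(body, angle, t), moved))
```

When the subject holds still, the residual is essentially zero. Once one point moves independently of the rest, the residual stays above zero however the transform is chosen, which is why a rigid fit alone cannot fully align the scans.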


2007 Nov. 22 - Dec 5, Data Processing

1. Convert the scan data to PLY files [Ply File Download]

We spent a lot of time looking for a way to export the data from Cyclone to Vrip. Finally, Sam found a program called MeshLab. We exported the scan data from Cyclone as PTX files, used MeshLab to re-orient the four surfaces, and then saved them as PLY files.

[MeshLab is really powerful and helped a lot in our project]

2. Re-register four scans with Scanalyze

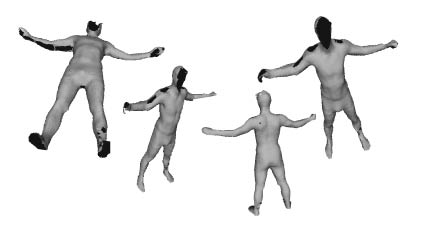

3. Create the surfaces using Vrip

4. Simplify the model using Plycrunch (we can also use another tool called QSlim)

5. Clean the model, remesh it using MeshLab, and save it as an OBJ file

6. Fill the holes using 3Ds Max, do further cleaning, attach the head and set the texture

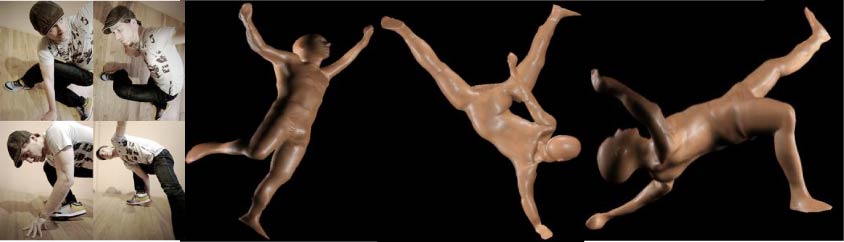

7. Compare our result with other commercial systems (AvatarMe)

8. Create Animation using 3Ds Max
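Step 1 above was done with MeshLab. To show roughly what such a conversion involves, the sketch below turns the text of a single-scan PTX file into a vertex-only ASCII PLY string. It relies on an assumed PTX layout (two grid-size lines, eight lines of scanner pose and transform, then one point per line) and on the assumption that cells with no laser return are stored as the origin. The function name is hypothetical, and the points are left in scanner coordinates.

```python
def ptx_to_ply(ptx_text):
    """Convert the text of a single-scan PTX file to an ASCII PLY string.

    Assumed PTX layout: 2 lines with the grid size (columns, rows), 8 lines
    of scanner pose and transform, then one "x y z intensity [r g b]" line
    per grid cell. The pose lines are ignored here.
    """
    lines = ptx_text.strip().splitlines()
    if len(lines) < 10:
        raise ValueError("PTX header is incomplete")
    cols, rows = int(lines[0]), int(lines[1])
    vertices = []
    for line in lines[10:10 + cols * rows]:
        fields = line.split()
        if len(fields) < 3:
            continue
        x, y, z = (float(v) for v in fields[:3])
        # Cells with no laser return are assumed to be stored as the origin.
        if x == 0.0 and y == 0.0 and z == 0.0:
            continue
        vertices.append((x, y, z))
    header = [
        "ply",
        "format ascii 1.0",
        f"element vertex {len(vertices)}",
        "property float x",
        "property float y",
        "property float z",
        "end_header",
    ]
    body = [f"{x:.6f} {y:.6f} {z:.6f}" for x, y, z in vertices]
    return "\n".join(header + body) + "\n"


if __name__ == "__main__":
    sample = "\n".join([
        "2", "2",                                     # 2 x 2 grid
        "0 0 0",                                      # scanner position
        "1 0 0", "0 1 0", "0 0 1",                    # scanner axes
        "1 0 0 0", "0 1 0 0", "0 0 1 0", "0 0 0 1",   # 4x4 transform
        "0 0 0 0.5",                                  # no return
        "1.0 2.0 3.0 0.4",
        "1.5 2.0 3.0 0.4",
        "0 0 0 0.5",                                  # no return
    ])
    print(ptx_to_ply(sample))
```

The output is a point cloud only. Building triangles from the scan grid, which a surface reconstruction step would normally expect, is left out to keep the sketch short.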


References:

- ALLEN, P. 2007. 3D Photography, Fall 2007. Class notes on Active 3D Sensing.
- ALOIMONOS, Y., AND SPETSAKIS, M. 1989. A unified theory of structure from motion.
- BERALDIN, J., BLAIS, F., COURNOYER, L., GODIN, G., AND RIOUX, M. 2000. Active 3D Sensing. Modelli E Metodi per lo studio e la conservazione dell'architettura storica, 22–46.
- BLAIS, F., PICARD, M., AND GODIN, G. 2004. Accurate 3D acquisition of freely moving objects. In 3DPVT04, 422–429.
- BLAIS, F. 2004. Review of 20 years of range sensor development. Journal of Electronic Imaging 13, 231.
- DHOND, U., AND AGGARWAL, J. 1989. Structure from stereo: a review. IEEE Transactions on Systems, Man and Cybernetics 19, 6, 1489–1510.
- DU, H., ZOU, D., AND CHEN, Y. Q. 2007. Relative epipolar motion of tracked features for correspondence in binocular stereo. In IEEE International Conference on Computer Vision (ICCV).
- NAYAR, S., WATANABE, M., AND NOGUCHI, M. 1996. Real-time focus range sensor. IEEE Transactions on Pattern Analysis and Machine Intelligence 18, 12, 1186–1198.
- RUSINKIEWICZ, S., HALL-HOLT, O., AND LEVOY, M. 2002. Real-time 3D model acquisition. Proceedings of the 29th annual conference on Computer graphics and interactive techniques, 438–446.
- SCHECHNER, Y., AND KIRYATI, N. 2000. Depth from Defocus vs. Stereo: How Different Really Are They? International Journal of Computer Vision 39, 2, 141–162.
- WATANABE, M., AND NAYAR, S. 1998. Rational Filters for Passive Depth from Defocus. International Journal of Computer Vision 27, 3, 203–225.
- ZHANG, R., TSAI, P., CRYER, J., AND SHAH, M. 1999. Shape from shading: A survey. IEEE Transactions on Pattern Analysis and Machine Intelligence 21, 8, 690–706.


Software or tools:

Facegen, MeshLab, Scanalyze, Plycrunch, VRip, QSlim, and 3Ds Max


Several existing commercial systems for 3D Human modelling:

TC2 : http://www.tc2.com/what/bodyscan/index.html

Cornell Univ. Body Scan : http://www.bodyscan.human.cornell.edu/scene0037.html

Headus : http://www.headus.com/au

Prometheus : http://personal.ee.surrey.ac.uk/Personal/A.Hilton/research/PrometheusResults/index.html

Thanks to Prof. Allen, Karan, Matei and Paul for their kind help and support.

Special thanks to our model, Daniel, for his professionalism, passion and great cooperation.
