Beau (Bo) Sun,

Ph.D.

450 Computer Science Bldg, Columbia University, New York, New York 10027

Email: bosun@cs.columbia.edu

It is difficult to say what is impossible, for the dream of yesterday is the hope of today and the reality of tomorrow. --Robert H. Goddard

New: Journal paper "Affine Double and Triple Wavelet Product Integrals for Rendering" accepted by TOG. The full paper will be posted soon.

EDUCATION

COLUMBIA UNIVERSITY

Ph.D. Candidate and Master in Computer Science, Master of Philosophy

Presidential Distinguished Fellow

GPA: 4.0/4.0

Advisors: Prof. Ravi Ramamoorthi, T.C. Chang Chaired Prof. Shree K. Nayar

CHU KECHEN HONORS COLLEGE, ZHEJIANG UNIVERSITY

Bachelor in Computer Science

Chu Kechen Outstanding Fellow

GPA: 3.94/4.0, No. 1

National Math and National Physics Olympiad First Prize Winners

Advisor: University President & Prof. Yunhe Pan

SIGGRAPH PUBLICATIONS (not including 2008 submissions)

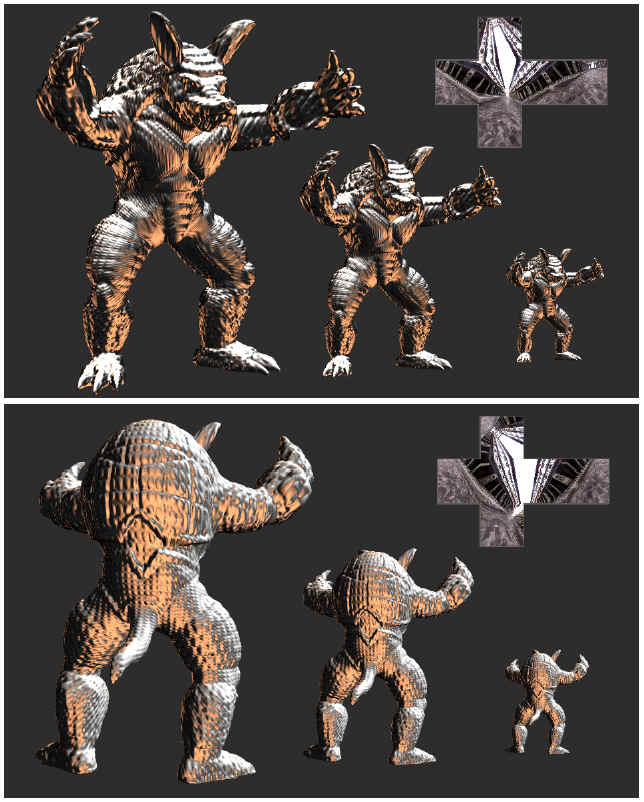

Frequency Domain Normal Map Filtering

Charles Han, Bo Sun, Ravi Ramamoorthi, and Eitan Grinspun

ACM SIGGRAPH 2007

[paper] [video: online, download] [shader] [trailer] [slides]

Abstract: Filtering is critical for representing image-based detail, such as textures or normal maps, across a variety of scales. While mipmapping textures is commonplace, accurate normal map filtering remains a challenging problem because of nonlinearities in shading: we cannot simply average nearby surface normals. In this paper, we show analytically that normal map filtering can be formalized as a spherical convolution of the normal distribution function (NDF) and the BRDF, for a large class of common BRDFs such as Lambertian, microfacet and factored measurements. This theoretical result explains many previous filtering techniques as special cases, and leads to a generalization to a broader class of measured and analytic BRDFs. Our practical algorithms leverage a significant body of previous work that has studied lighting-BRDF convolution. We show how spherical harmonics can be used to filter the NDF for Lambertian and low-frequency specular BRDFs, while spherical von Mises-Fisher distributions can be used for high-frequency materials.
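To illustrate why averaging normals is not enough, here is a minimal Python sketch (not taken from the paper). It assumes a Blinn-Phong specular lobe as a stand-in BRDF and a hypothetical two-texel V-groove; all function names are illustrative. It compares the reference result (shade each texel, then average) with the naive one (average the normals, then shade once).

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def blinn_phong(normal, half_vector, exponent):
    """Specular lobe max(n.h, 0)^exponent; an assumed stand-in BRDF."""
    return max(dot(normal, half_vector), 0.0) ** exponent

def shade_then_average(normals, half_vector, exponent):
    """Reference: evaluate shading per texel, then average the results."""
    values = [blinn_phong(n, half_vector, exponent) for n in normals]
    return sum(values) / len(values)

def average_then_shade(normals, half_vector, exponent):
    """Naive filtering: average the normals first, then shade once."""
    mean = tuple(sum(n[i] for n in normals) / len(normals) for i in range(3))
    return blinn_phong(normalize(mean), half_vector, exponent)

# Hypothetical V-groove: two texel normals tilted +/-30 degrees about the y axis.
tilt = math.radians(30.0)
normals = [
    (math.sin(tilt), 0.0, math.cos(tilt)),
    (-math.sin(tilt), 0.0, math.cos(tilt)),
]
half_vector = (0.0, 0.0, 1.0)
exponent = 50

reference = shade_then_average(normals, half_vector, exponent)
naive = average_then_shade(normals, half_vector, exponent)
print(f"shade then average: {reference:.6f}")
print(f"average then shade: {naive:.6f}")
```

The averaged normal points straight at the half vector, so the naive result is a full-strength highlight, while the reference is nearly dark. The gap comes from the nonlinearity of the lobe, which is the effect the NDF-based formulation is meant to account for.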

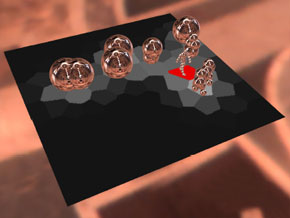

Real-Time Soft Shadows in Dynamic Scenes using Spherical Harmonic Exponentiation

Zhong Ren, Rui Wang, John Snyder, Kun Zhou, Xinguo Liu, Bo Sun, Peter-Pike Sloan, Hujun Bao, Qunsheng Peng, and Baining Guo

ACM SIGGRAPH 2006

[paper] [video: online, download]

Abstract: Previous methods for soft shadows numerically integrate over many light directions at each receiver point, testing blocker visibility in each direction. We introduce a method for real-time soft shadows in dynamic scenes illuminated by large, low-frequency light sources where such integration is impractical. Our method operates on vectors representing low-frequency visibility of blockers in the spherical harmonic basis. Blocking geometry is modeled as a set of spheres; relatively few spheres capture the low-frequency blocking effect of complicated geometry. At each receiver point, we compute the product of visibility vectors for these blocker spheres as seen from the point. Instead of computing an expensive SH product per blocker as in previous work, we perform inexpensive vector sums to accumulate the log of blocker visibility. SH exponentiation then yields the product visibility vector over all blockers. We show how the SH exponentiation required can be approximated accurately and efficiently for low-order SH, accelerating previous CPU-based methods by a factor of 10 or more, depending on blocker complexity, and allowing real-time GPU implementation.
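The log-then-exponentiate idea can be sketched pointwise in Python. This is only an illustration of the underlying identity, not the paper's method: the paper operates on SH coefficient vectors, where log and exponentiation are nonlinear operations that must be approximated, whereas the sketch below works on per-direction samples. The visibility values are hypothetical and assumed to lie in (0, 1] so that the logarithm is defined.

```python
import math

def product_direct(visibilities):
    """Multiply per-blocker visibility values for one direction."""
    result = 1.0
    for v in visibilities:
        result *= v
    return result

def product_via_logs(visibilities):
    """Accumulate log-visibility with sums, then exponentiate once."""
    log_total = sum(math.log(v) for v in visibilities)
    return math.exp(log_total)

# Hypothetical soft visibility in (0, 1] for three blockers,
# each sampled in the same four directions.
blockers = [
    [1.0, 0.8, 0.5, 0.9],
    [0.7, 1.0, 0.6, 0.9],
    [0.9, 0.9, 1.0, 0.4],
]

# Regroup so each tuple holds all blockers' values for one direction.
per_direction = list(zip(*blockers))
direct = [product_direct(vs) for vs in per_direction]
via_logs = [product_via_logs(vs) for vs in per_direction]

for d, l in zip(direct, via_logs):
    print(f"direct={d:.6f}  via logs={l:.6f}")
```

Both columns agree up to floating-point rounding. The appeal of the log form is that adding one more blocker costs a single sum rather than a full product.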

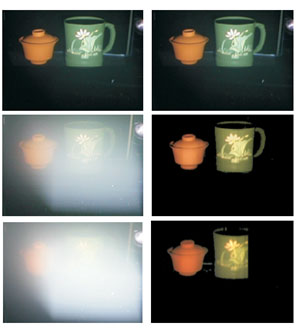

A Practical Analytic Single Scattering Model for Real-Time Rendering

Bo Sun, Ravi Ramamoorthi, Srinivasa Narasimhan, and Shree Nayar

ACM SIGGRAPH 2005

[paper] [video: online, download] [shader] [slides] [maya plugin]

Implemented in DirectX 10 Demo [video]

Abstract: We consider real-time rendering of scenes in participating media, capturing the effects of light scattering in fog, mist and haze. While a number of sophisticated approaches based on Monte Carlo and finite element simulation have been developed, those methods do not work at interactive rates. The most common real-time methods are essentially simple variants of the OpenGL fog model. While easy to use and specify, that model excludes many important qualitative effects like glows around light sources, the impact of volumetric scattering on the appearance of surfaces such as the diffusing of glossy highlights, and the appearance under complex lighting such as environment maps. In this paper, we present an alternative physically based approach that captures these effects while maintaining real-time performance and the ease-of-use of the OpenGL fog model. Our method is based on an explicit analytic integration of the single scattering light transport equations for an isotropic point light source in a homogeneous participating medium. We can implement the model in modern programmable graphics hardware using a few small numerical lookup tables stored as texture maps. Our model can also be easily adapted to generate the appearances of materials with arbitrary BRDFs, environment map lighting, and precomputed radiance transfer methods, in the presence of participating media. Hence, our techniques can be widely used in real-time rendering applications.
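For context, the baseline that the abstract contrasts against can be sketched in a few lines of Python. This shows an exponential distance fog of the kind used by the fixed-function OpenGL fog model (blend factor f = exp(-density * distance)); it is not the paper's analytic single scattering model, and the function names and sample colors are illustrative assumptions.

```python
import math

def exp_fog_factor(density, distance):
    """Exponential fog factor f = exp(-density * distance), clamped to [0, 1]."""
    f = math.exp(-density * distance)
    return min(max(f, 0.0), 1.0)

def apply_fog(surface_rgb, fog_rgb, density, distance):
    """Blend surface and fog colors as f * surface + (1 - f) * fog."""
    f = exp_fog_factor(density, distance)
    return tuple(f * s + (1.0 - f) * g for s, g in zip(surface_rgb, fog_rgb))

# Hypothetical colors and fog density, chosen only for illustration.
surface = (1.0, 0.2, 0.2)
fog = (0.5, 0.5, 0.5)

near = apply_fog(surface, fog, density=0.1, distance=0.0)
far = apply_fog(surface, fog, density=0.1, distance=100.0)
print("near:", near)
print("far: ", far)
```

The blend depends only on the distance to the viewer and a constant fog color. Light positions never enter the formula, so this baseline cannot produce glows around light sources or soften glossy highlights, which are the effects the abstract lists as missing.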

Time-Varying BRDFs

Bo Sun, Kalyan Sunkavalli, Ravi Ramamoorthi, Peter Belhumeur, and Shree Nayar

IEEE Transactions on Visualization and Computer Graphics (TVCG)

Eurographics 2006 Workshop on Natural Phenomena

[paper] [video: online, download] [slides] [database]

Abstract: The properties of virtually all real-world materials change with time, causing their BRDFs to be time-varying. However, none of the existing BRDF models and databases take time variation into consideration; they represent the appearance of a material at a single time instance. In this work, we address the acquisition, analysis, modeling and rendering of a wide range of time-varying BRDFs. We have developed an acquisition system that is capable of sampling a material's BRDF at multiple time instances, with each time sample acquired within 36 seconds. We have used this acquisition system to measure the BRDFs of a wide range of time-varying phenomena which include the drying of various types of paints (watercolor, spray, and oil), the drying of wet rough surfaces (cement, plaster, and fabrics), the accumulation of dusts (household and joint compound) on surfaces, and the melting of materials (chocolate). Analytic BRDF functions are fit to these measurements and the model parameters' variations with time are analyzed. Each category exhibits interesting and sometimes non-intuitive parameter trends. These parameter trends are then used to develop analytic time-varying BRDF (TVBRDF) models. The analytic TVBRDF models enable us to apply effects such as paint drying and dust accumulation to arbitrary surfaces and novel materials.

Structured Light in Scattering Media

Srinivasa Narasimhan, Shree Nayar, Bo Sun and Sanjeev Koppal

IEEE International Conference on Computer Vision (ICCV) 2005

[paper] [video]

Abstract: Virtually all structured light methods assume that the scene and the sources are immersed in pure air and that light is neither scattered nor absorbed. Recently, however, structured lighting has found growing application in underwater and aerial imaging, where scattering effects cannot be ignored. In this paper, we present a comprehensive analysis of two representative methods - light stripe range scanning and photometric stereo - in the presence of scattering. For both methods, we derive physical models for the appearances of a surface immersed in a scattering medium. Based on these models, we present results on (a) the condition for object detectability in light striping and (b) the number of sources required for photometric stereo. In both cases, we demonstrate that while traditional methods fail when scattering is significant, our methods accurately recover the scene (depths, normals, albedos) as well as the properties of the medium. These results are in turn used to restore the appearances of scenes as if they were captured in clear air. Although we have focused on light striping and photometric stereo, our approach can also be extended to other methods such as grid coding, gated and active polarization imaging.

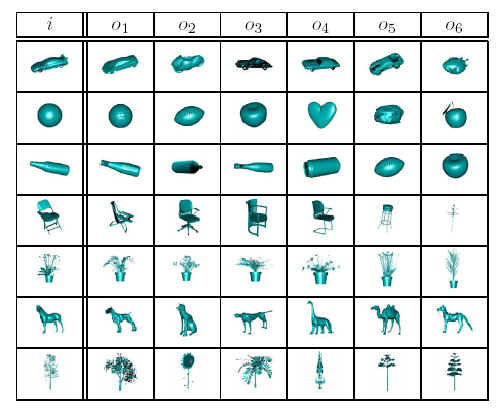

Directional Histogram Model for Three-Dimensional Shape Similarity

Xinguo Liu, Bo Sun, Sing Bing Kang, and Harry Shum

IEEE Computer Vision and Pattern Recognition (CVPR) 2003

[paper]

Abstract: In this paper, we propose a novel shape representation we call Directional Histogram Model (DHM). It captures the shape variation of an object and is invariant to scaling and rigid transforms. The DHM is computed by first extracting a directional distribution of thickness histogram signatures, which are translation invariant. We show how the extraction of the thickness histogram distribution can be accelerated using conventional graphics hardware. Orientation invariance is achieved by computing the spherical harmonic transform of this distribution. Extensive experiments show that the DHM is capable of high discrimination power and is robust to noise.

Real-Time Stereo using GPU

Bo Sun

Technical Report, Zhejiang University, 2003

Abstract: Stereo vision is a fundamental problem in computer vision with a wide range of applications. This report considers the problem of determining feature correspondences across multiple views. I first combine the space-sweep approach with the use of commodity graphics hardware for real-time view synthesis, implementing the method introduced in Ruigang Yang's SIGGRAPH02 paper. The method estimates a dense depth map from the current viewpoint. This result is rather coarse and visually unpleasing because of occlusions and shadows. I therefore combine the simple and efficient shift window (S. B. Kang et al.) with the Neighboring View Cooperate method to refine the result, which significantly reduces noise, sharpens edges, and thus improves accuracy.

PH.D. DISSERTATION

Analytical, Frequency and Wavelet based Mathematical Models for Real-Time Rendering

Bo Sun, Ph.D. Thesis, Columbia University, 2008

-Updated on Feb 2008-