| NSF Grant 0312963: A Robotics-Based Computational Environment to Simulate the Human Hand | ||

|

Prof. Peter Allen |

|

|

|

||

| Overview |

|

| Computer models for studying the human hand |

|

|

Robotic hands are still a long

way from matching the grasping and manipulation capability of their

human counterparts, but computer simulation may help us understand this

disparity. We are developing a biomechanically realistic model of the

human hand, which can be used in a simulation environment to analyze

the hand from a functional point of view. Such a model would also serve

to aid clinicians planning reconstructive surgeries of a hand, and

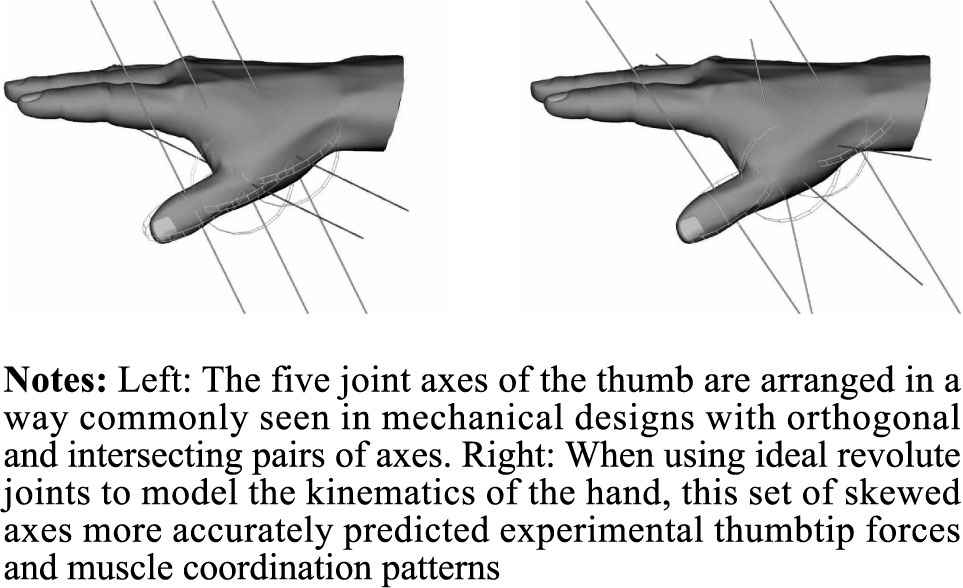

creating more effective designs for hand prostheses. In this project, we have collaborated with the Cornell Neuromuscular Biomechanics Laboratory, which has provided us with detailed anatomical and kinematic data of the human hand that we have incorporated into some of our models.

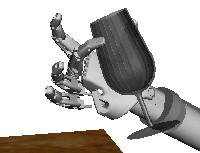

A major effort focuses on the deformation that soft fingertips undergo

during grasping tasks, which greatly increases the ability to create

stable grasps using subsets of fingers. Here are examples of dynamic

simulations of an anthropomorphic hand using soft fingertips for

performing manipulation tasks. To achieve the computational rates

needed for dynamic simulation, we are using an analytical soft finger

model that uses general expressions for non-planar contacts of elastic

bodies

to account for the local geometry and structure of the bodies in

contact. By incorporating the soft finger model and tendon-driven control, we are better able to simulate a true human hand within our grasping simulator, GraspIt!, which is currently available for download. |
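As a rough illustration of what an analytical soft-contact model computes, the sketch below uses the classical Hertz formula for an elastic sphere pressed against a rigid flat surface, together with the common elliptical approximation of the soft-finger friction limit. It is a simplified stand-in and not the model implemented in GraspIt!, which handles general non-planar contacts; the function names and the material values in the usage note are assumptions made for illustration.

```python
import math


def effective_modulus(young_modulus, poisson_ratio):
    """Effective modulus E* of an elastic sphere pressed on a rigid flat."""
    return young_modulus / (1.0 - poisson_ratio ** 2)


def hertz_contact_radius(normal_force, sphere_radius, e_star):
    """Radius of the circular Hertz contact patch: a = (3 F R / (4 E*))^(1/3)."""
    return (3.0 * normal_force * sphere_radius / (4.0 * e_star)) ** (1.0 / 3.0)


def max_torsional_moment(normal_force, friction_coeff, contact_radius):
    """Largest frictional moment about the contact normal for a Hertzian
    pressure distribution: M_max = (3 pi / 16) * mu * F * a."""
    return 3.0 * math.pi / 16.0 * friction_coeff * normal_force * contact_radius


def within_soft_finger_limit(normal_force, tangential_force, torsional_moment,
                             friction_coeff, contact_radius):
    """Elliptical limit surface: (f_t / f_max)^2 + (m / m_max)^2 <= 1."""
    if normal_force <= 0.0 or contact_radius <= 0.0:
        return False  # no contact, so no friction can be transmitted
    f_max = friction_coeff * normal_force
    m_max = max_torsional_moment(normal_force, friction_coeff, contact_radius)
    return (tangential_force / f_max) ** 2 + (torsional_moment / m_max) ** 2 <= 1.0
```

For example, with assumed values for a rubber-like fingertip (Young's modulus of 1 MPa, Poisson ratio of 0.5, radius of 1 cm), `hertz_contact_radius(5.0, 0.01, effective_modulus(1.0e6, 0.5))` gives the patch radius for a 5 N normal force. A larger patch raises the moment the contact can resist, which is why soft fingertips help stabilize grasps that use only a few fingers.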

| Robotic grasping and manipulation |

|

|

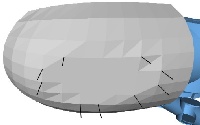

Another major focus of this project is to extend

the capabilities of our GraspIt! simulator, originally

built in the Robotics Lab at Columbia. As part of this project, we have

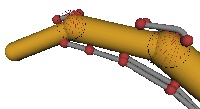

extended the grasp analysis features integrated within the GraspIt! simulator to account for soft

fingertips, as in the case of robotic hands equipped with fingers

coated in a thin layer of rubber (upper image). By using Finite Element

Analysis, the deformation of the fingerpad can be computed (bottom

image) together with the space of forces and moments that the finger

can apply at a contact. Because the method is finite element based, the results

are physically accurate, at the expense of

higher computational effort.

|

| Physically based dynamic simulation |

|

|

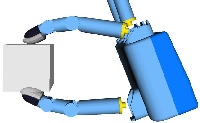

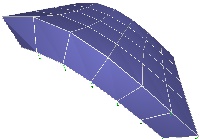

We have developed a Finite

Element Method based engine for dynamic simulation of deformable

objects (top image shows this engine used to compute the deformation of

a box-shaped object under the effect of gravity). This software can be

used as a C++ library, and also as a stand-alone application as it

includes an OpenGL-based visualization component. The engine can

currently use three simulation methods:

|

| Grasp Planning |

|

|

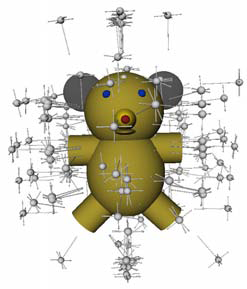

The ability to plan and execute a realizable and stable grasp on a 3D object is crucial for many robotics applications, but many grasp planning approaches ignore the relative sizes of the robotic hand and the object being grasped, or do not account for physical joint and positioning limitations. We have developed a grasp planner that can consider the full range of parameters of a real hand and an arbitrary object, including physical and material properties as well as environmental obstacles and forces, and produce an output grasp that can be executed immediately without any further computation. We do this by decomposing a 3D model into a superquadric "decomposition tree". We then use this tree to prune the intractably large space of possible grasps down to a subspace that is likely to contain many good grasps. The parameters of the grasps within this subspace are sampled and the results evaluated in GraspIt! to find a highly stable grasp to output. We have experimental results on a database of object models using a Barrett hand. We have also implemented an SVM classifier that can be used to reduce the number of candidate grasps. |
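For readers unfamiliar with superquadrics, the sketch below evaluates the standard inside-outside function of a single superquadric and samples points on its surface, which could serve as candidate contact locations. It illustrates only the shape primitive, not the decomposition tree, the pruning step, or the planner itself; the function names and the sampling scheme are assumptions.

```python
import math


def signed_power(value, exponent):
    """sign(value) * |value| ** exponent, as used in superquadric formulas."""
    return math.copysign(abs(value) ** exponent, value)


def inside_outside(point, scales, e1, e2):
    """Standard superquadric inside-outside function F.

    F < 1 inside the shape, F == 1 on the surface, F > 1 outside.
    scales = (a1, a2, a3) are the semi-axes; e1 and e2 control squareness.
    """
    x, y, z = (abs(p / a) for p, a in zip(point, scales))
    xy_term = (x ** (2.0 / e2) + y ** (2.0 / e2)) ** (e2 / e1)
    return xy_term + z ** (2.0 / e1)


def surface_point(eta, omega, scales, e1, e2):
    """Point on the surface for latitude eta and longitude omega."""
    a1, a2, a3 = scales
    cos_eta = signed_power(math.cos(eta), e1)
    return (
        a1 * cos_eta * signed_power(math.cos(omega), e2),
        a2 * cos_eta * signed_power(math.sin(omega), e2),
        a3 * signed_power(math.sin(eta), e1),
    )


def sample_surface(scales, e1, e2, n_eta=5, n_omega=8):
    """Regular grid of surface points (hypothetical candidate contacts)."""
    points = []
    for i in range(n_eta):
        eta = -math.pi / 2.0 + math.pi * (i + 0.5) / n_eta
        for j in range(n_omega):
            omega = -math.pi + 2.0 * math.pi * j / n_omega
            points.append(surface_point(eta, omega, scales, e1, e2))
    return points
```

With `e1 = e2 = 1` the shape is an ellipsoid, and exponents close to zero give box-like shapes, so a handful of parameters can approximate many object parts. That compactness is what makes a per-part search over grasp parameters tractable.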

| Related Publications |

|