When a scene is lit by a source of light, the radiance of each point in the scene can be viewed as having two components, namely, direct and global. The direct component is due to the direct illumination of the point by the source. The global component is due to the illumination of the point by other points in the scene. It is desirable to have a method for measuring the direct and global components of a scene, as each component conveys important information about the scene that cannot be inferred from their sum. For instance, the direct component gives us the purest measurement of how the material properties of a scene point interact with the source and camera. Therefore, a method that can measure just the direct component can be immediately used to enhance a wide range of scene capture techniques that are used in computer vision and computer graphics. The global component conveys the complex optical interactions between different objects and media in the scene. It is the global component that makes photorealistic rendering a hard and computationally intensive problem. A measurement of this component could provide new insights into these interactions that in turn could aid the development of more efficient rendering algorithms.

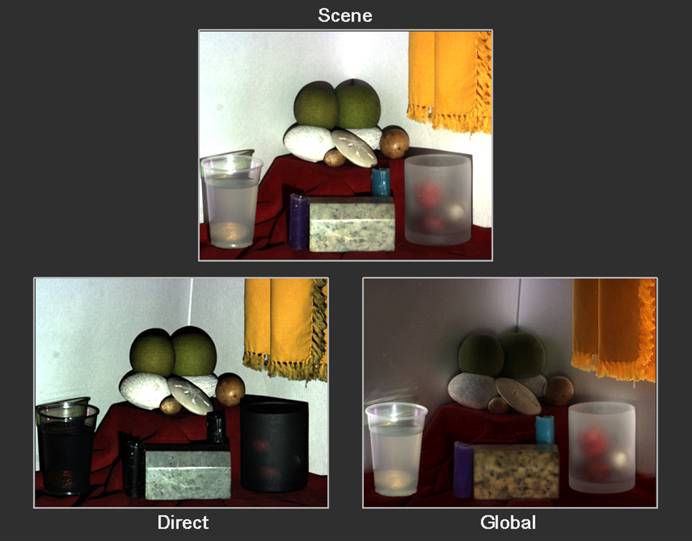

The goal of this project is to develop efficient methods for separating the direct and global components of a scene lit by a single light source and viewed by a camera. We show that the direct and global components at all scene points can be efficiently measured by using high-frequency illumination patterns. This approach does not require the scattering properties of the scene points to be known. We only assume that the global contribution received by each scene point is a smooth function with respect to the frequency of the lighting. This assumption makes it possible, in theory, to do the separation by capturing just two images taken with a dense binary illumination pattern and its complement. In practice, due to the resolution limits imposed by the source and the camera, a larger set of images (25 in our setting) is used. We show separation results for several scenes that include diffuse and specular interreflections, subsurface scattering due to translucent surfaces, and volumetric scattering due to dense media. We also show how the direct and global components can be used to generate new images that represent changes in the optical properties of the objects and the media in the scene.
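The two-image idea above can be illustrated with a minimal sketch. It rests on assumptions that the text does not spell out: half of the source pixels are lit in every pattern, unlit source pixels emit no light, and the global component is smooth enough that a half-lit pattern delivers half of it to every scene point. Under those assumptions a lit point records direct + global/2 and an unlit point records global/2, so the per-pixel maximum and minimum over the captured images yield both components. The function names and the flat-list image representation are hypothetical choices made for illustration.

```python
def separate_max_min(images):
    """Split per-pixel radiance into (direct, global) lists.

    images: list of images, each a flat list of pixel radiances, one image
    per shifted high-frequency pattern. Assumes (not stated in the text)
    that half the source pixels are lit in every pattern and that unlit
    source pixels emit no light.
    """
    direct, global_ = [], []
    for samples in zip(*images):
        l_max, l_min = max(samples), min(samples)
        direct.append(l_max - l_min)   # lit minus unlit leaves only direct
        global_.append(2.0 * l_min)    # unlit pixel sees half the global
    return direct, global_


def simulate_binary(direct, global_, lit_mask):
    """Hypothetical forward model: direct where lit, plus half the global everywhere."""
    return [d * m + 0.5 * g for d, g, m in zip(direct, global_, lit_mask)]


# Synthetic three-pixel scene, captured under a pattern and its complement.
true_direct = [0.5, 0.25, 0.0]
true_global = [0.25, 0.5, 0.5]
pattern = [1, 0, 1]
complement = [0, 1, 0]

images = [
    simulate_binary(true_direct, true_global, pattern),
    simulate_binary(true_direct, true_global, complement),
]
est_direct, est_global = separate_max_min(images)
```

Passing more than two images (for example, many shifted copies of the pattern) works unchanged, since the maximum and minimum are taken over however many samples each pixel has.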

We have developed several variants of our method that seek to minimize the number of images needed for separation. By using a sinusoid-based illumination pattern, the separation can be done using just three images taken by changing the phase of the pattern. When the resolutions of the camera and the source are greater than the desired resolution of the direct and global images, the separation can be done with a single image by assuming that neighboring scene points have similar direct and global components. When the scene is lit by a simple point light source, such as the sun, the source cannot be controlled to generate the required high-frequency illumination patterns. In such cases, the shadow of a line or mesh occluder can be swept over the scene while it is captured by a video camera. The captured video can then be used to compute the direct and global components. This project was done in collaboration with Michael Grossberg at City College of New York and Ramesh Raskar at MERL.
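The three-image sinusoid variant mentioned above can be sketched per pixel as follows. This is an illustration under assumed conditions, not necessarily the exact formulation used in the project: the pattern brightness is taken to be 0.5 * (1 + sin(phase)) with the three phases spaced 2π/3 apart, and the global component is taken to respond only to the pattern's mean brightness of 0.5. Each measurement is then a constant offset of (direct + global)/2 plus a sinusoid of amplitude direct/2, and both quantities can be recovered from three samples without knowing the phase at the pixel. The function names are hypothetical.

```python
import math


def separate_three_phase(i0, i1, i2):
    """Recover (direct, global) at one pixel from three phase-shifted samples.

    Assumes i_k = offset + amplitude * sin(theta + 2*pi*k/3), with
    offset = (direct + global) / 2 and amplitude = direct / 2.
    """
    offset = (i0 + i1 + i2) / 3.0
    # For three equally spaced phases, the squared pairwise differences
    # sum to 4.5 * amplitude**2, independent of the unknown phase theta.
    s = (i0 - i1) ** 2 + (i1 - i2) ** 2 + (i2 - i0) ** 2
    amplitude = math.sqrt(2.0 * s) / 3.0
    direct = 2.0 * amplitude
    global_ = 2.0 * offset - direct
    return direct, global_


def simulate_sinusoid(direct, global_, theta):
    """Hypothetical forward model for one pixel under the three shifted patterns."""
    return [
        direct * 0.5 * (1.0 + math.sin(theta + 2.0 * math.pi * k / 3.0))
        + 0.5 * global_
        for k in range(3)
    ]


# Synthetic pixel with an arbitrary, unknown pattern phase.
samples = simulate_sinusoid(0.6, 0.3, theta=1.1)
est_direct, est_global = separate_three_phase(*samples)
```

The estimate does not depend on `theta`, which is why no correspondence between source and camera pixels is needed in this sketch.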