Motion Deblurring Using Hybrid Imaging

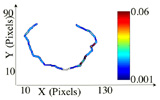

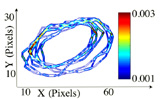

Motion blur due to camera motion can significantly degrade the quality of an image. Because the path of the camera motion can be arbitrary, deblurring motion-blurred images is a hard problem. Previous approaches include blind restoration of motion-blurred images, optical correction using stabilized lenses, and special CMOS sensors that limit the exposure time in the presence of motion. In this project, we exploit the fundamental trade-off between spatial resolution and temporal resolution to construct a hybrid camera that can measure its own motion during image integration. The acquired motion information is used to compute a point spread function (PSF) that represents the path of the camera during integration. This PSF is then used to deblur the image. To verify the feasibility of hybrid imaging for motion deblurring, we have implemented a prototype hybrid camera. The prototype was evaluated in different indoor and outdoor scenes using long exposures and complex camera motion paths. The results show that, with minimal resources, hybrid imaging outperforms previous approaches to the motion blur problem.
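The path-to-PSF-to-deblurring pipeline described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the function names (`psf_from_path`, `blur`, `richardson_lucy`), the synthetic camera path, the uniform time sampling of that path, the circular (FFT-based) blur model, and the choice of Richardson-Lucy deconvolution, which this page does not name as the method used by the prototype.

```python
import numpy as np

def psf_from_path(path_xy, size):
    """Rasterize a camera path (pixel offsets, assumed sampled at uniform
    time steps) into a normalized size x size PSF. Pixels visited more
    often receive more weight, i.e. the camera dwelt there longer."""
    psf = np.zeros((size, size))
    c = size // 2
    for x, y in path_xy:
        ix, iy = int(round(x)) + c, int(round(y)) + c
        if 0 <= ix < size and 0 <= iy < size:
            psf[iy, ix] += 1.0
    return psf / psf.sum()

def _otf(psf, shape):
    """Embed the PSF in an image-sized array with its center at index (0, 0)
    and return its 2-D FFT (the optical transfer function)."""
    k = psf.shape[0]
    padded = np.zeros(shape)
    padded[:k, :k] = psf
    padded = np.roll(padded, (-(k // 2), -(k // 2)), axis=(0, 1))
    return np.fft.rfft2(padded)

def blur(img, psf):
    """Circular convolution of img with psf (assumed blur model)."""
    return np.fft.irfft2(np.fft.rfft2(img) * _otf(psf, img.shape), s=img.shape)

def richardson_lucy(blurred, psf, iters=50, eps=1e-8):
    """Richardson-Lucy deconvolution with a known PSF (an assumed choice
    of non-blind deconvolution algorithm)."""
    H = _otf(psf, blurred.shape)
    est = blurred.copy()
    for _ in range(iters):
        reblurred = np.fft.irfft2(np.fft.rfft2(est) * H, s=blurred.shape)
        ratio = blurred / np.maximum(reblurred, eps)
        # Correlating with the PSF equals multiplying by conj(H) in frequency.
        est = est * np.fft.irfft2(np.fft.rfft2(ratio) * np.conj(H), s=blurred.shape)
    return est

# Synthetic demonstration: a bright square on a dim background.
sharp = np.full((64, 64), 0.1)
sharp[24:40, 24:40] = 1.0

# Hypothetical measured camera path: 33 uniform time samples along a slanted line.
path = [(x, 0.3 * x) for x in np.linspace(-4.0, 4.0, 33)]
psf = psf_from_path(path, size=9)

blurred = blur(sharp, psf)
restored = richardson_lucy(blurred, psf, iters=50)
```

In this sketch the PSF is treated as known rather than estimated from the blurred image, which is the point of measuring the camera motion: the deconvolution becomes non-blind. A real path would come from the motion measured during integration rather than from a synthetic line.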

Publications

Pictures and Videos


Slides

Related Projects

Super-Resolution: Jitter Camera