Depth from Defocus


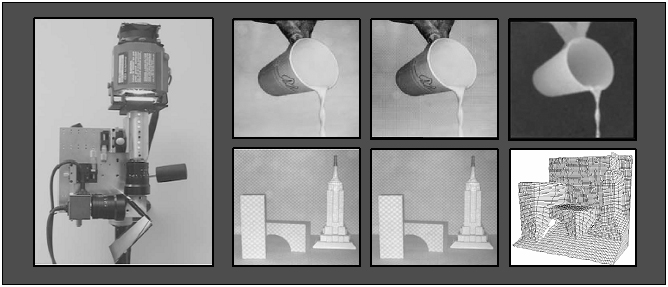

The structure of a dynamic scene can only be recovered with a real-time range sensor. Depth from defocus offers a direct solution to fast and dense range estimation. It is computationally efficient because it circumvents the correspondence problem faced by stereo and by feature tracking in structure from motion. However, accurate depth estimation requires theoretical and practical solutions to a variety of problems, including the recovery of textureless surfaces, precise blur estimation, and magnification variations caused by defocusing. In the first part of this project, both textured and textureless surfaces are recovered using an illumination pattern that is projected via the same optical path used to acquire images. The illumination pattern is optimized to ensure maximum accuracy and spatial resolution in the computed depth. The relative blurring between two images is computed using a narrow-band linear operator that is designed by considering all the optical, sensing, and computational elements of the depth from defocus system. Defocus-invariant magnification is achieved by adding an aperture to the imaging optics. A prototype focus range sensor has been developed that produces depth images of up to 512x480 pixels at 30 Hz with an accuracy better than 0.3%. Several experiments have been conducted to verify the performance of the sensor.
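
As a rough illustration of why the relative blur between two images carries depth, the sketch below uses an idealized thin-lens model with two sensor planes. It is a geometric toy under stated assumptions, not the narrow-band operator or the optics of the prototype sensor. The function names, the focal length, the aperture, and the sensor distances are all hypothetical values chosen for the example.

```python
def image_distance(u, f):
    """Thin-lens image distance v for an object at depth u (same units as f)."""
    return f * u / (u - f)


def blur_diameter(u, f, aperture, s):
    """Blur-circle diameter on a sensor plane at distance s behind the lens."""
    v = image_distance(u, f)
    return aperture * abs(s - v) / v


def depth_from_blur_pair(b1, b2, f, s1, s2):
    """Recover object depth from blur diameters at two sensor planes.

    Assumes s1 < s2 and that the object comes to focus between the two
    planes (s1 <= v <= s2), so that b1 grows and b2 shrinks as v increases.
    """
    # From b1 = A (v - s1) / v and b2 = A (s2 - v) / v, the aperture A cancels.
    v = (b1 * s2 + b2 * s1) / (b1 + b2)
    # Invert the thin-lens equation 1/f = 1/u + 1/v for u.
    return f * v / (v - f)


if __name__ == "__main__":
    f, aperture = 25.0, 10.0   # hypothetical lens, millimetres
    s1, s2 = 25.5, 26.5        # hypothetical near- and far-focus sensor planes
    true_depth = 1000.0
    b1 = blur_diameter(true_depth, f, aperture, s1)
    b2 = blur_diameter(true_depth, f, aperture, s2)
    print(depth_from_blur_pair(b1, b2, f, s1, s2))
```

In this toy model the aperture size cancels out of the ratio of the two blur diameters, so depth depends only on the relative blur and the known sensor positions. A real system has to estimate that relative blur from image content, which is where surface texture (or a projected pattern) and a carefully designed operator become necessary.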


Publications

Videos

Related Projects: Shape from Focus